This is based on code from the following book

The following blog post walks through what PyTorch’s optimizers are.

Link to Jupyter Notebook that this blog post was made from

PyTorch comes with a module of optimizers, torch.optim. We can swap our vanilla gradient descent for any of them without modifying much code.
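To make the idea of replacing vanilla gradient descent concrete, here is a minimal sketch. The toy data x and y and the one-argument loss_fn are made up for illustration and are not part of the notebook. It suggests that a single optim.SGD step (with default settings, so no momentum) should match the hand-written update params -= lr * grad.

```python
import torch
import torch.optim as optim

# Toy data, assumed purely for illustration.
x = torch.tensor([1.0, 2.0, 3.0])
y = torch.tensor([2.0, 4.0, 6.0])
lr = 1e-2

def loss_fn(params, x, y):
    w, b = params
    return ((w * x + b - y) ** 2).mean()

# Hand-written gradient descent step.
manual = torch.tensor([1.0, 0.0], requires_grad=True)
loss_fn(manual, x, y).backward()
with torch.no_grad():
    manual -= lr * manual.grad

# The same step delegated to optim.SGD.
auto = torch.tensor([1.0, 0.0], requires_grad=True)
optimizer = optim.SGD([auto], lr=lr)
optimizer.zero_grad()
loss_fn(auto, x, y).backward()
optimizer.step()

same_update = torch.allclose(manual, auto)
print(same_update)
```

Only the lines that build the optimizer and call step would need to change to try a different algorithm.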

%matplotlib inline

import numpy as np

import pandas as pd

import seaborn as sns

from matplotlib import pyplot

import torch

torch.set_printoptions(edgeitems=2, linewidth=75)

We take our input from the previous notebook and apply the same scaling.

t_c = torch.tensor([0.5, 14.0, 15.0, 28.0, 11.0,
                    8.0, 3.0, -4.0, 6.0, 13.0, 21.0])

t_u = torch.tensor([35.7, 55.9, 58.2, 81.9, 56.3, 48.9,
                    33.9, 21.8, 48.4, 60.4, 68.4])

t_un = 0.1 * t_u

Same model and loss function as before.

def model(t_u, w, b):
    return w * t_u + b

def loss_fn(t_p, t_c):
    squared_diffs = (t_p - t_c)**2
    return squared_diffs.mean()

import torch.optim as optim

dir(optim)

['ASGD',

'Adadelta',

'Adagrad',

'Adam',

'AdamW',

'Adamax',

'LBFGS',

'Optimizer',

'RMSprop',

'Rprop',

'SGD',

'SparseAdam',

'__builtins__',

'__cached__',

'__doc__',

'__file__',

'__loader__',

'__name__',

'__package__',

'__path__',

'__spec__',

'lr_scheduler']
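The dir output mixes optimizer classes with module attributes such as __doc__ and lr_scheduler. As a rough sketch, one way to keep only the actual optimizers is to filter for subclasses of optim.Optimizer. The variable name optimizer_classes is an assumption for illustration, and the exact list depends on the installed PyTorch version.

```python
import inspect
import torch.optim as optim

# Keep only classes that derive from the Optimizer base class,
# excluding the base class itself.
optimizer_classes = sorted(
    name
    for name, obj in vars(optim).items()
    if inspect.isclass(obj)
    and issubclass(obj, optim.Optimizer)
    and obj is not optim.Optimizer
)
print(optimizer_classes)
```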

params = torch.tensor([1.0, 0.0], requires_grad=True)

learning_rate = 1e-5

optimizer = optim.SGD([params], lr=learning_rate)

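The optimizer updates the values of our parameters when we call step.

Note that the code below forgets to zero out the gradients! That is harmless for a single step, but in a loop the gradients from every backward call would accumulate.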

t_p = model(t_u, *params)

loss = loss_fn(t_p, t_c)

loss.backward()

optimizer.step()

params

tensor([ 9.5483e-01, -8.2600e-04], requires_grad=True)
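To see why zeroing matters, the following sketch calls backward twice on the same parameters. The toy data and the one-argument loss_fn are assumptions made for illustration. Because PyTorch adds each new gradient to whatever is already stored in .grad, the second call should leave twice the single-pass gradient.

```python
import torch

# Toy data, assumed purely for illustration.
x = torch.tensor([1.0, 2.0, 3.0])
y = torch.tensor([2.0, 4.0, 6.0])
params = torch.tensor([1.0, 0.0], requires_grad=True)

def loss_fn(params):
    w, b = params
    return ((w * x + b - y) ** 2).mean()

loss_fn(params).backward()
first_grad = params.grad.clone()

# Second backward without zeroing: the new gradient is added to the old one.
loss_fn(params).backward()
accumulated_grad = params.grad.clone()

# Zeroing first gives back the single-pass gradient.
params.grad.zero_()
loss_fn(params).backward()
fresh_grad = params.grad.clone()

print(first_grad, accumulated_grad, fresh_grad)
```

Calling optimizer.zero_grad() before backward has the same effect as the manual zero_() here for every parameter the optimizer manages.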

With a call to zero_grad added before backward, we can use this snippet in a loop for training.

params = torch.tensor([1.0, 0.0], requires_grad=True)

learning_rate = 1e-2

optimizer = optim.SGD([params], lr=learning_rate)

t_p = model(t_un, *params)

loss = loss_fn(t_p, t_c)

optimizer.zero_grad()  # clear gradients left over from earlier backward calls

loss.backward()

optimizer.step()

params

tensor([1.7761, 0.1064], requires_grad=True)

def training_loop(n_epochs, optimizer, params, t_u, t_c):
    for epoch in range(1, n_epochs + 1):
        t_p = model(t_u, *params)
        loss = loss_fn(t_p, t_c)

        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if epoch % 500 == 0:
            print('Epoch %d, Loss %f' % (epoch, float(loss)))

    return params

params = torch.tensor([1.0, 0.0], requires_grad=True)

learning_rate = 1e-2

optimizer = optim.SGD([params], lr=learning_rate)

training_loop(
    n_epochs = 5000,
    optimizer = optimizer,
    params = params,  # must be the same tensor that was handed to the optimizer
    t_u = t_un,
    t_c = t_c)

Epoch 500, Loss 7.860116

Epoch 1000, Loss 3.828538

Epoch 1500, Loss 3.092191

Epoch 2000, Loss 2.957697

Epoch 2500, Loss 2.933134

Epoch 3000, Loss 2.928648

Epoch 3500, Loss 2.927830

Epoch 4000, Loss 2.927679

Epoch 4500, Loss 2.927652

Epoch 5000, Loss 2.927647

tensor([ 5.3671, -17.3012], requires_grad=True)

Switching the optimizer to Adam, this time on the unnormalized input t_u, we reach the same loss.

params = torch.tensor([1.0, 0.0], requires_grad=True)

learning_rate = 1e-1

optimizer = optim.Adam([params], lr=learning_rate)  # only the optimizer changes

training_loop(
    n_epochs = 2000,
    optimizer = optimizer,
    params = params,
    t_u = t_u,  # unnormalized input this time
    t_c = t_c)

Epoch 500, Loss 7.612903

Epoch 1000, Loss 3.086700

Epoch 1500, Loss 2.928578

Epoch 2000, Loss 2.927646

tensor([ 0.5367, -17.3021], requires_grad=True)
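The run above uses the raw t_u rather than the scaled t_un. As a hedged sketch of why that matters, the code below trains on the raw input with both optimizers. The helper final_loss is hypothetical and written only for this comparison. With plain SGD at lr=1e-2 the updates are expected to blow up on inputs of this size, while Adam's per-parameter scaling should still converge.

```python
import math
import torch
import torch.optim as optim

t_c = torch.tensor([0.5, 14.0, 15.0, 28.0, 11.0,
                    8.0, 3.0, -4.0, 6.0, 13.0, 21.0])
t_u = torch.tensor([35.7, 55.9, 58.2, 81.9, 56.3, 48.9,
                    33.9, 21.8, 48.4, 60.4, 68.4])

def final_loss(optimizer_cls, lr, n_epochs):
    # Hypothetical helper: train w and b on the raw input, return the last loss.
    params = torch.tensor([1.0, 0.0], requires_grad=True)
    optimizer = optimizer_cls([params], lr=lr)
    for _ in range(n_epochs):
        w, b = params
        loss = ((w * t_u + b - t_c) ** 2).mean()
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    return loss.item()

sgd_loss = final_loss(optim.SGD, lr=1e-2, n_epochs=100)
adam_loss = final_loss(optim.Adam, lr=1e-1, n_epochs=2000)
print(f"SGD on raw input:  {sgd_loss}")
print(f"Adam on raw input: {adam_loss:.4f}")
```

Since t_u is ten times t_un, the weight learned on the raw input comes out at roughly a tenth of the weight learned on the scaled input, which matches the two parameter tensors printed above.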

Training and Validation Splits

n_samples = t_u.shape[0]

n_val = int(0.2 * n_samples)

shuffled_indices = torch.randperm(n_samples)

train_indices = shuffled_indices[:-n_val]

val_indices = shuffled_indices[-n_val:]

train_indices, val_indices  # the split is random, so these differ from run to run

(tensor([ 5, 9, 1, 6, 7, 10, 3, 8, 0]), tensor([2, 4]))

train_t_u = t_u[train_indices]

train_t_c = t_c[train_indices]

val_t_u = t_u[val_indices]

val_t_c = t_c[val_indices]

train_t_un = 0.1 * train_t_u

val_t_un = 0.1 * val_t_u
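Because torch.randperm is random, the split changes on every run. A minimal sketch of a reproducible variant follows, assuming a seeded torch.Generator is acceptable. The seed value 0 and the helper name split_indices are arbitrary choices for illustration.

```python
import torch

def split_indices(n_samples, val_fraction=0.2, seed=0):
    # Hypothetical helper: seeded shuffle, then hold out the last n_val indices.
    n_val = int(val_fraction * n_samples)
    generator = torch.Generator().manual_seed(seed)
    shuffled = torch.randperm(n_samples, generator=generator)
    return shuffled[:-n_val], shuffled[-n_val:]

train_indices, val_indices = split_indices(11)
train_again, val_again = split_indices(11)
print(train_indices, val_indices)
```

With 11 samples and a 20% validation fraction this yields 9 training indices and 2 validation indices, the same sizes as in the split above.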

def training_loop(n_epochs, optimizer, params, train_t_u, val_t_u,
                  train_t_c, val_t_c, print_periodically=True):
    val_loss_each_epoch = []
    for epoch in range(1, n_epochs + 1):
        # Forward pass on the training set.
        train_t_p = model(train_t_u, *params)
        train_loss = loss_fn(train_t_p, train_t_c)

        # Forward pass on the validation set; this loss is only monitored.
        val_t_p = model(val_t_u, *params)
        val_loss = loss_fn(val_t_p, val_t_c)
        val_loss_each_epoch.append(val_loss.item())

        optimizer.zero_grad()
        train_loss.backward()  # only the training loss drives the update
        optimizer.step()

        if print_periodically and (epoch <= 3 or epoch % 500 == 0):
            print(f"\tEpoch {epoch}, Training loss {train_loss.item():.4f},"
                  f" Validation loss {val_loss.item():.4f}")

    return *params, train_loss.item(), val_loss.item(), val_loss_each_epoch

params = torch.tensor([1.0, 0.0], requires_grad=True)

learning_rate = 1e-2

optimizer = optim.SGD([params], lr=learning_rate)

training_loop(
    n_epochs = 3000,
    optimizer = optimizer,
    params = params,
    train_t_u = train_t_un,  # scaled training input
    val_t_u = val_t_un,      # scaled validation input
    train_t_c = train_t_c,
    val_t_c = val_t_c)

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2194

Epoch 500, Training loss 7.1544, Validation loss 2.7312

Epoch 1000, Training loss 3.5517, Validation loss 2.5743

Epoch 1500, Training loss 3.1001, Validation loss 2.5225

Epoch 2000, Training loss 3.0435, Validation loss 2.5046

Epoch 2500, Training loss 3.0364, Validation loss 2.4983

Epoch 3000, Training loss 3.0355, Validation loss 2.4961

(tensor([ 5.3719, -17.2278], requires_grad=True),

3.0354840755462646,

2.4961061477661133)
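The validation loss is never passed to backward, so autograd does not need to track it. One optional refinement, sketched here on made-up toy data rather than the notebook's tensors, is to compute it inside torch.no_grad().

```python
import torch

# Toy data, assumed purely for illustration.
train_x, train_y = torch.tensor([1.0, 2.0, 3.0]), torch.tensor([2.0, 4.0, 6.0])
val_x, val_y = torch.tensor([4.0]), torch.tensor([8.0])
params = torch.tensor([1.0, 0.0], requires_grad=True)

def model(x, w, b):
    return w * x + b

def loss_fn(pred, target):
    return ((pred - target) ** 2).mean()

# Training loss: tracked by autograd so backward() can be called on it.
train_loss = loss_fn(model(train_x, *params), train_y)

# Validation loss: computed without building a computation graph.
with torch.no_grad():
    val_loss = loss_fn(model(val_x, *params), val_y)

train_tracks_grad = train_loss.requires_grad
val_tracks_grad = val_loss.requires_grad
print(train_tracks_grad, val_tracks_grad)
```

The numerical value of the validation loss is unchanged; the only difference is that no graph is kept for it.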

Searching

results = []

val_loss_over_time_by_name = {}  # maps each run name to a DataFrame of its validation loss per epoch

optimizer_names = [
    'ASGD',
    'Adadelta',
    'Adagrad',
    'Adam',
    'AdamW',
    'Adamax',
    'RMSprop',
    'Rprop',
    'SGD'
]

learning_rates = [1e-4, 1e-3, 1e-2]

epochs = [500, 5000, 5000]  # 5000 is listed twice, so each 5000-epoch run is repeated in the output

for optimizer_name in optimizer_names:
    for learning_rate in learning_rates:
        for number_of_epochs in epochs:
            name = f"{optimizer_name} alpha {learning_rate} epochs {number_of_epochs}"
            print(name)

            params = torch.tensor([1.0, 0.0], requires_grad=True)
            # Look the optimizer class up by name on the optim module.
            optimizer = getattr(optim, optimizer_name)([params], lr=learning_rate)

            learned_params = training_loop(
                n_epochs = number_of_epochs,
                optimizer = optimizer,
                params = params,
                train_t_u = train_t_un,
                val_t_u = val_t_un,
                train_t_c = train_t_c,
                val_t_c = val_t_c,
                print_periodically=True
            )

            beta_1, beta_0, train_loss, val_loss, val_loss_over_time = learned_params
            # print(f"\tbeta_1 (weight multiplied by measurement in unknown units) {beta_1}")
            # print(f"\tbeta_0 (y intercept) {beta_0}")
            # print(f"\ttrain_loss {train_loss}")
            # print(f"\tval_loss {val_loss}")

            results.append(
                {
                    "optimizer_name": optimizer_name,
                    "learning_rate": learning_rate,
                    "number_of_epochs": number_of_epochs,
                    "name": name,
                    "w": beta_1.item(),
                    "b": beta_0.item(),
                    "train_loss": train_loss,
                    "val_loss": val_loss
                }
            )

            val_loss_over_time_df = pd.DataFrame(val_loss_over_time).reset_index()
            val_loss_over_time_df.columns = ["epoch", "val_loss"]
            val_loss_over_time_by_name[name] = val_loss_over_time_df
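The three nested loops visit every combination of optimizer, learning rate and epoch count. As an alternative sketch (not the notebook's approach), itertools.product can build the same grid in one pass, and dict.fromkeys can drop a repeated epoch value so that no configuration runs twice. The shortened optimizer_names list and the names unique_epochs and grid are assumptions for illustration.

```python
from itertools import product

optimizer_names = ["SGD", "Adam"]  # shortened list, for illustration only
learning_rates = [1e-4, 1e-3, 1e-2]
epochs = [500, 5000, 5000]

# dict.fromkeys drops repeated values while preserving order.
unique_epochs = list(dict.fromkeys(epochs))

# Every (optimizer, learning rate, epochs) combination, in nested-loop order.
grid = list(product(optimizer_names, learning_rates, unique_epochs))

for optimizer_name, learning_rate, number_of_epochs in grid[:3]:
    print(f"{optimizer_name} alpha {learning_rate} epochs {number_of_epochs}")
```

Each tuple in grid could then be fed to the same body as the innermost loop above.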

ASGD alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

ASGD alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

Epoch 1000, Training loss 34.4265, Validation loss 3.2288

Epoch 1500, Training loss 33.7817, Validation loss 3.2290

Epoch 2000, Training loss 33.1505, Validation loss 3.2205

Epoch 2500, Training loss 32.5322, Validation loss 3.2116

Epoch 3000, Training loss 31.9267, Validation loss 3.2028

Epoch 3500, Training loss 31.3335, Validation loss 3.1942

Epoch 4000, Training loss 30.7526, Validation loss 3.1856

Epoch 4500, Training loss 30.1836, Validation loss 3.1772

Epoch 5000, Training loss 29.6263, Validation loss 3.1689

ASGD alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

Epoch 1000, Training loss 34.4265, Validation loss 3.2288

Epoch 1500, Training loss 33.7817, Validation loss 3.2290

Epoch 2000, Training loss 33.1505, Validation loss 3.2205

Epoch 2500, Training loss 32.5322, Validation loss 3.2116

Epoch 3000, Training loss 31.9267, Validation loss 3.2028

Epoch 3500, Training loss 31.3335, Validation loss 3.1942

Epoch 4000, Training loss 30.7526, Validation loss 3.1856

Epoch 4500, Training loss 30.1836, Validation loss 3.1772

Epoch 5000, Training loss 29.6263, Validation loss 3.1689

ASGD alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6358, Validation loss 3.1691

ASGD alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6358, Validation loss 3.1691

Epoch 1000, Training loss 24.6529, Validation loss 3.0919

Epoch 1500, Training loss 20.6038, Validation loss 3.0243

Epoch 2000, Training loss 17.3134, Validation loss 2.9650

Epoch 2500, Training loss 14.6397, Validation loss 2.9130

Epoch 3000, Training loss 12.4670, Validation loss 2.8671

Epoch 3500, Training loss 10.7012, Validation loss 2.8266

Epoch 4000, Training loss 9.2661, Validation loss 2.7909

Epoch 4500, Training loss 8.0998, Validation loss 2.7592

Epoch 5000, Training loss 7.1519, Validation loss 2.7311

ASGD alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6358, Validation loss 3.1691

Epoch 1000, Training loss 24.6529, Validation loss 3.0919

Epoch 1500, Training loss 20.6038, Validation loss 3.0243

Epoch 2000, Training loss 17.3134, Validation loss 2.9650

Epoch 2500, Training loss 14.6397, Validation loss 2.9130

Epoch 3000, Training loss 12.4670, Validation loss 2.8671

Epoch 3500, Training loss 10.7012, Validation loss 2.8266

Epoch 4000, Training loss 9.2661, Validation loss 2.7909

Epoch 4500, Training loss 8.0998, Validation loss 2.7592

Epoch 5000, Training loss 7.1519, Validation loss 2.7311

ASGD alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2195

Epoch 500, Training loss 7.1592, Validation loss 2.7314

ASGD alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2195

Epoch 500, Training loss 7.1592, Validation loss 2.7314

Epoch 1000, Training loss 3.5551, Validation loss 2.5745

Epoch 1500, Training loss 3.1015, Validation loss 2.5228

Epoch 2000, Training loss 3.0440, Validation loss 2.5049

Epoch 2500, Training loss 3.0366, Validation loss 2.4986

Epoch 3000, Training loss 3.0356, Validation loss 2.4964

Epoch 3500, Training loss 3.0354, Validation loss 2.4956

Epoch 4000, Training loss 3.0354, Validation loss 2.4953

Epoch 4500, Training loss 3.0354, Validation loss 2.4952

Epoch 5000, Training loss 3.0354, Validation loss 2.4952

ASGD alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2195

Epoch 500, Training loss 7.1592, Validation loss 2.7314

Epoch 1000, Training loss 3.5551, Validation loss 2.5745

Epoch 1500, Training loss 3.1015, Validation loss 2.5228

Epoch 2000, Training loss 3.0440, Validation loss 2.5049

Epoch 2500, Training loss 3.0366, Validation loss 2.4986

Epoch 3000, Training loss 3.0356, Validation loss 2.4964

Epoch 3500, Training loss 3.0354, Validation loss 2.4956

Epoch 4000, Training loss 3.0354, Validation loss 2.4953

Epoch 4500, Training loss 3.0354, Validation loss 2.4952

Epoch 5000, Training loss 3.0354, Validation loss 2.4952

Adadelta alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6554, Validation loss 56.5546

Epoch 3, Training loss 85.6553, Validation loss 56.5546

Epoch 500, Training loss 85.6300, Validation loss 56.5257

Adadelta alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6554, Validation loss 56.5546

Epoch 3, Training loss 85.6553, Validation loss 56.5546

Epoch 500, Training loss 85.6300, Validation loss 56.5257

Epoch 1000, Training loss 85.5900, Validation loss 56.4800

Epoch 1500, Training loss 85.5400, Validation loss 56.4229

Epoch 2000, Training loss 85.4813, Validation loss 56.3559

Epoch 2500, Training loss 85.4156, Validation loss 56.2810

Epoch 3000, Training loss 85.3429, Validation loss 56.1979

Epoch 3500, Training loss 85.2643, Validation loss 56.1083

Epoch 4000, Training loss 85.1803, Validation loss 56.0124

Epoch 4500, Training loss 85.0912, Validation loss 55.9107

Epoch 5000, Training loss 84.9973, Validation loss 55.8035

Adadelta alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6554, Validation loss 56.5546

Epoch 3, Training loss 85.6553, Validation loss 56.5546

Epoch 500, Training loss 85.6300, Validation loss 56.5257

Epoch 1000, Training loss 85.5900, Validation loss 56.4800

Epoch 1500, Training loss 85.5400, Validation loss 56.4229

Epoch 2000, Training loss 85.4813, Validation loss 56.3559

Epoch 2500, Training loss 85.4156, Validation loss 56.2810

Epoch 3000, Training loss 85.3429, Validation loss 56.1979

Epoch 3500, Training loss 85.2643, Validation loss 56.1083

Epoch 4000, Training loss 85.1803, Validation loss 56.0124

Epoch 4500, Training loss 85.0912, Validation loss 55.9107

Epoch 5000, Training loss 84.9973, Validation loss 55.8035

Adadelta alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6551, Validation loss 56.5543

Epoch 3, Training loss 85.6548, Validation loss 56.5540

Epoch 500, Training loss 85.4015, Validation loss 56.2648

Adadelta alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6551, Validation loss 56.5543

Epoch 3, Training loss 85.6548, Validation loss 56.5540

Epoch 500, Training loss 85.4015, Validation loss 56.2648

Epoch 1000, Training loss 85.0055, Validation loss 55.8128

Epoch 1500, Training loss 84.5108, Validation loss 55.2482

Epoch 2000, Training loss 83.9376, Validation loss 54.5939

Epoch 2500, Training loss 83.2986, Validation loss 53.8647

Epoch 3000, Training loss 82.6034, Validation loss 53.0712

Epoch 3500, Training loss 81.8594, Validation loss 52.2221

Epoch 4000, Training loss 81.0730, Validation loss 51.3247

Epoch 4500, Training loss 80.2495, Validation loss 50.3849

Epoch 5000, Training loss 79.3935, Validation loss 49.4082

Adadelta alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6551, Validation loss 56.5543

Epoch 3, Training loss 85.6548, Validation loss 56.5540

Epoch 500, Training loss 85.4015, Validation loss 56.2648

Epoch 1000, Training loss 85.0055, Validation loss 55.8128

Epoch 1500, Training loss 84.5108, Validation loss 55.2482

Epoch 2000, Training loss 83.9376, Validation loss 54.5939

Epoch 2500, Training loss 83.2986, Validation loss 53.8647

Epoch 3000, Training loss 82.6034, Validation loss 53.0712

Epoch 3500, Training loss 81.8594, Validation loss 52.2221

Epoch 4000, Training loss 81.0730, Validation loss 51.3247

Epoch 4500, Training loss 80.2495, Validation loss 50.3849

Epoch 5000, Training loss 79.3935, Validation loss 49.4082

Adadelta alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6527, Validation loss 56.5515

Epoch 3, Training loss 85.6499, Validation loss 56.5484

Epoch 500, Training loss 83.1666, Validation loss 53.7141

Adadelta alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6527, Validation loss 56.5515

Epoch 3, Training loss 85.6499, Validation loss 56.5484

Epoch 500, Training loss 83.1666, Validation loss 53.7141

Epoch 1000, Training loss 79.4724, Validation loss 49.4983

Epoch 1500, Training loss 75.1670, Validation loss 44.5863

Epoch 2000, Training loss 70.5870, Validation loss 39.3633

Epoch 2500, Training loss 65.9726, Validation loss 34.1044

Epoch 3000, Training loss 61.5033, Validation loss 29.0160

Epoch 3500, Training loss 57.3126, Validation loss 24.2519

Epoch 4000, Training loss 53.4949, Validation loss 19.9223

Epoch 4500, Training loss 50.1112, Validation loss 16.0998

Epoch 5000, Training loss 47.1933, Validation loss 12.8244

Adadelta alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6527, Validation loss 56.5515

Epoch 3, Training loss 85.6499, Validation loss 56.5484

Epoch 500, Training loss 83.1666, Validation loss 53.7141

Epoch 1000, Training loss 79.4724, Validation loss 49.4983

Epoch 1500, Training loss 75.1670, Validation loss 44.5863

Epoch 2000, Training loss 70.5870, Validation loss 39.3633

Epoch 2500, Training loss 65.9726, Validation loss 34.1044

Epoch 3000, Training loss 61.5033, Validation loss 29.0160

Epoch 3500, Training loss 57.3126, Validation loss 24.2519

Epoch 4000, Training loss 53.4949, Validation loss 19.9223

Epoch 4500, Training loss 50.1112, Validation loss 16.0998

Epoch 5000, Training loss 47.1933, Validation loss 12.8244

Adagrad alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6407, Validation loss 56.5379

Epoch 500, Training loss 85.2844, Validation loss 56.1311

Adagrad alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6407, Validation loss 56.5379

Epoch 500, Training loss 85.2844, Validation loss 56.1311

Epoch 1000, Training loss 85.1259, Validation loss 55.9503

Epoch 1500, Training loss 85.0047, Validation loss 55.8119

Epoch 2000, Training loss 84.9026, Validation loss 55.6954

Epoch 2500, Training loss 84.8128, Validation loss 55.5929

Epoch 3000, Training loss 84.7318, Validation loss 55.5004

Epoch 3500, Training loss 84.6574, Validation loss 55.4155

Epoch 4000, Training loss 84.5881, Validation loss 55.3364

Epoch 4500, Training loss 84.5226, Validation loss 55.2617

Epoch 5000, Training loss 84.4618, Validation loss 55.1923

Adagrad alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6407, Validation loss 56.5379

Epoch 500, Training loss 85.2844, Validation loss 56.1311

Epoch 1000, Training loss 85.1259, Validation loss 55.9503

Epoch 1500, Training loss 85.0047, Validation loss 55.8119

Epoch 2000, Training loss 84.9026, Validation loss 55.6954

Epoch 2500, Training loss 84.8128, Validation loss 55.5929

Epoch 3000, Training loss 84.7318, Validation loss 55.5004

Epoch 3500, Training loss 84.6574, Validation loss 55.4155

Epoch 4000, Training loss 84.5881, Validation loss 55.3364

Epoch 4500, Training loss 84.5226, Validation loss 55.2617

Epoch 5000, Training loss 84.4618, Validation loss 55.1923

Adagrad alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5086, Validation loss 56.3872

Epoch 500, Training loss 82.0349, Validation loss 52.4222

Adagrad alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5086, Validation loss 56.3872

Epoch 500, Training loss 82.0349, Validation loss 52.4222

Epoch 1000, Training loss 80.5431, Validation loss 50.7195

Epoch 1500, Training loss 79.4224, Validation loss 49.4405

Epoch 2000, Training loss 78.4938, Validation loss 48.3806

Epoch 2500, Training loss 77.6877, Validation loss 47.4607

Epoch 3000, Training loss 76.9687, Validation loss 46.6402

Epoch 3500, Training loss 76.3155, Validation loss 45.8948

Epoch 4000, Training loss 75.7145, Validation loss 45.2089

Epoch 4500, Training loss 75.1561, Validation loss 44.5717

Epoch 5000, Training loss 74.6332, Validation loss 43.9751

Adagrad alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5086, Validation loss 56.3872

Epoch 500, Training loss 82.0349, Validation loss 52.4222

Epoch 1000, Training loss 80.5431, Validation loss 50.7195

Epoch 1500, Training loss 79.4224, Validation loss 49.4405

Epoch 2000, Training loss 78.4938, Validation loss 48.3806

Epoch 2500, Training loss 77.6877, Validation loss 47.4607

Epoch 3000, Training loss 76.9687, Validation loss 46.6402

Epoch 3500, Training loss 76.3155, Validation loss 45.8948

Epoch 4000, Training loss 75.7145, Validation loss 45.2089

Epoch 4500, Training loss 75.1561, Validation loss 44.5717

Epoch 5000, Training loss 74.6332, Validation loss 43.9751

Adagrad alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.2010, Validation loss 54.8945

Epoch 500, Training loss 57.6999, Validation loss 24.6808

Adagrad alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.2010, Validation loss 54.8945

Epoch 500, Training loss 57.6999, Validation loss 24.6808

Epoch 1000, Training loss 50.5468, Validation loss 16.5840

Epoch 1500, Training loss 46.5815, Validation loss 12.1548

Epoch 2000, Training loss 44.0707, Validation loss 9.4133

Epoch 2500, Training loss 42.3649, Validation loss 7.6167

Epoch 3000, Training loss 41.1456, Validation loss 6.3996

Epoch 3500, Training loss 40.2350, Validation loss 5.5561

Epoch 4000, Training loss 39.5263, Validation loss 4.9612

Epoch 4500, Training loss 38.9526, Validation loss 4.5352

Epoch 5000, Training loss 38.4714, Validation loss 4.2256

Adagrad alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.2010, Validation loss 54.8945

Epoch 500, Training loss 57.6999, Validation loss 24.6808

Epoch 1000, Training loss 50.5468, Validation loss 16.5840

Epoch 1500, Training loss 46.5815, Validation loss 12.1548

Epoch 2000, Training loss 44.0707, Validation loss 9.4133

Epoch 2500, Training loss 42.3649, Validation loss 7.6167

Epoch 3000, Training loss 41.1456, Validation loss 6.3996

Epoch 3500, Training loss 40.2350, Validation loss 5.5561

Epoch 4000, Training loss 39.5263, Validation loss 4.9612

Epoch 4500, Training loss 38.9526, Validation loss 4.5352

Epoch 5000, Training loss 38.4714, Validation loss 4.2256

Adam alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.5026, Validation loss 51.8148

Adam alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.5026, Validation loss 51.8148

Epoch 1000, Training loss 77.6139, Validation loss 47.3770

Epoch 1500, Training loss 73.9759, Validation loss 43.2260

Epoch 2000, Training loss 70.5698, Validation loss 39.3404

Epoch 2500, Training loss 67.3807, Validation loss 35.7033

Epoch 3000, Training loss 64.3965, Validation loss 32.3008

Epoch 3500, Training loss 61.6076, Validation loss 29.1220

Epoch 4000, Training loss 59.0063, Validation loss 26.1586

Epoch 4500, Training loss 56.5864, Validation loss 23.4038

Epoch 5000, Training loss 54.3430, Validation loss 20.8522

Adam alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.5026, Validation loss 51.8148

Epoch 1000, Training loss 77.6139, Validation loss 47.3770

Epoch 1500, Training loss 73.9759, Validation loss 43.2260

Epoch 2000, Training loss 70.5698, Validation loss 39.3404

Epoch 2500, Training loss 67.3807, Validation loss 35.7033

Epoch 3000, Training loss 64.3965, Validation loss 32.3008

Epoch 3500, Training loss 61.6076, Validation loss 29.1220

Epoch 4000, Training loss 59.0063, Validation loss 26.1586

Epoch 4500, Training loss 56.5864, Validation loss 23.4038

Epoch 5000, Training loss 54.3430, Validation loss 20.8522

Adam alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4835, Validation loss 56.3584

Epoch 500, Training loss 55.2777, Validation loss 21.9455

Adam alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4835, Validation loss 56.3584

Epoch 500, Training loss 55.2777, Validation loss 21.9455

Epoch 1000, Training loss 42.8083, Validation loss 8.1165

Epoch 1500, Training loss 38.4026, Validation loss 4.1677

Epoch 2000, Training loss 36.1285, Validation loss 3.3609

Epoch 2500, Training loss 34.1304, Validation loss 3.1924

Epoch 3000, Training loss 32.2028, Validation loss 3.1374

Epoch 3500, Training loss 30.3603, Validation loss 3.1119

Epoch 4000, Training loss 28.6105, Validation loss 3.0970

Epoch 4500, Training loss 26.9507, Validation loss 3.0854

Epoch 5000, Training loss 25.3745, Validation loss 3.0739

Adam alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4835, Validation loss 56.3584

Epoch 500, Training loss 55.2777, Validation loss 21.9455

Epoch 1000, Training loss 42.8083, Validation loss 8.1165

Epoch 1500, Training loss 38.4026, Validation loss 4.1677

Epoch 2000, Training loss 36.1285, Validation loss 3.3609

Epoch 2500, Training loss 34.1304, Validation loss 3.1924

Epoch 3000, Training loss 32.2028, Validation loss 3.1374

Epoch 3500, Training loss 30.3603, Validation loss 3.1119

Epoch 4000, Training loss 28.6105, Validation loss 3.0970

Epoch 4500, Training loss 26.9507, Validation loss 3.0854

Epoch 5000, Training loss 25.3745, Validation loss 3.0739

Adam alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9507, Validation loss 54.6088

Epoch 500, Training loss 27.8058, Validation loss 3.0615

Adam alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9507, Validation loss 54.6088

Epoch 500, Training loss 27.8058, Validation loss 3.0615

Epoch 1000, Training loss 16.2508, Validation loss 2.8700

Epoch 1500, Training loss 9.1708, Validation loss 2.7344

Epoch 2000, Training loss 5.4193, Validation loss 2.6365

Epoch 2500, Training loss 3.7619, Validation loss 2.5698

Epoch 3000, Training loss 3.1943, Validation loss 2.5288

Epoch 3500, Training loss 3.0576, Validation loss 2.5073

Epoch 4000, Training loss 3.0371, Validation loss 2.4983

Epoch 4500, Training loss 3.0354, Validation loss 2.4956

Epoch 5000, Training loss 3.0354, Validation loss 2.4950

Adam alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9507, Validation loss 54.6088

Epoch 500, Training loss 27.8058, Validation loss 3.0615

Epoch 1000, Training loss 16.2508, Validation loss 2.8700

Epoch 1500, Training loss 9.1708, Validation loss 2.7344

Epoch 2000, Training loss 5.4193, Validation loss 2.6365

Epoch 2500, Training loss 3.7619, Validation loss 2.5698

Epoch 3000, Training loss 3.1943, Validation loss 2.5288

Epoch 3500, Training loss 3.0576, Validation loss 2.5073

Epoch 4000, Training loss 3.0371, Validation loss 2.4983

Epoch 4500, Training loss 3.0354, Validation loss 2.4956

Epoch 5000, Training loss 3.0354, Validation loss 2.4950

AdamW alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6469, Validation loss 56.5449

Epoch 3, Training loss 85.6383, Validation loss 56.5352

Epoch 500, Training loss 81.5413, Validation loss 51.8572

AdamW alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6469, Validation loss 56.5449

Epoch 3, Training loss 85.6383, Validation loss 56.5352

Epoch 500, Training loss 81.5413, Validation loss 51.8572

Epoch 1000, Training loss 77.6875, Validation loss 47.4576

Epoch 1500, Training loss 74.0827, Validation loss 43.3427

Epoch 2000, Training loss 70.7076, Validation loss 39.4906

Epoch 2500, Training loss 67.5455, Validation loss 35.8826

Epoch 3000, Training loss 64.5878, Validation loss 32.5084

Epoch 3500, Training loss 61.8212, Validation loss 29.3532

Epoch 4000, Training loss 59.2393, Validation loss 26.4101

Epoch 4500, Training loss 56.8366, Validation loss 23.6728

Epoch 5000, Training loss 54.6060, Validation loss 21.1337

AdamW alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6469, Validation loss 56.5449

Epoch 3, Training loss 85.6383, Validation loss 56.5352

Epoch 500, Training loss 81.5413, Validation loss 51.8572

Epoch 1000, Training loss 77.6875, Validation loss 47.4576

Epoch 1500, Training loss 74.0827, Validation loss 43.3427

Epoch 2000, Training loss 70.7076, Validation loss 39.4906

Epoch 2500, Training loss 67.5455, Validation loss 35.8826

Epoch 3000, Training loss 64.5878, Validation loss 32.5084

Epoch 3500, Training loss 61.8212, Validation loss 29.3532

Epoch 4000, Training loss 59.2393, Validation loss 26.4101

Epoch 4500, Training loss 56.8366, Validation loss 23.6728

Epoch 5000, Training loss 54.6060, Validation loss 21.1337

AdamW alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5701, Validation loss 56.4573

Epoch 3, Training loss 85.4850, Validation loss 56.3601

Epoch 500, Training loss 55.5340, Validation loss 22.2194

AdamW alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5701, Validation loss 56.4573

Epoch 3, Training loss 85.4850, Validation loss 56.3601

Epoch 500, Training loss 55.5340, Validation loss 22.2194

Epoch 1000, Training loss 43.0774, Validation loss 8.3753

Epoch 1500, Training loss 38.6096, Validation loss 4.3015

Epoch 2000, Training loss 36.3186, Validation loss 3.4165

Epoch 2500, Training loss 34.3219, Validation loss 3.2153

Epoch 3000, Training loss 32.3932, Validation loss 3.1444

Epoch 3500, Training loss 30.5497, Validation loss 3.1106

Epoch 4000, Training loss 28.8042, Validation loss 3.0922

Epoch 4500, Training loss 27.1559, Validation loss 3.0798

Epoch 5000, Training loss 25.5986, Validation loss 3.0690

AdamW alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5701, Validation loss 56.4573

Epoch 3, Training loss 85.4850, Validation loss 56.3601

Epoch 500, Training loss 55.5340, Validation loss 22.2194

Epoch 1000, Training loss 43.0774, Validation loss 8.3753

Epoch 1500, Training loss 38.6096, Validation loss 4.3015

Epoch 2000, Training loss 36.3186, Validation loss 3.4165

Epoch 2500, Training loss 34.3219, Validation loss 3.2153

Epoch 3000, Training loss 32.3932, Validation loss 3.1444

Epoch 3500, Training loss 30.5497, Validation loss 3.1106

Epoch 4000, Training loss 28.8042, Validation loss 3.0922

Epoch 4500, Training loss 27.1559, Validation loss 3.0798

Epoch 5000, Training loss 25.5986, Validation loss 3.0690

AdamW alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.8065, Validation loss 55.5854

Epoch 3, Training loss 83.9657, Validation loss 54.6253

Epoch 500, Training loss 28.1584, Validation loss 3.0684

AdamW alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.8065, Validation loss 55.5854

Epoch 3, Training loss 83.9657, Validation loss 54.6253

Epoch 500, Training loss 28.1584, Validation loss 3.0684

Epoch 1000, Training loss 16.9523, Validation loss 2.8717

Epoch 1500, Training loss 10.1140, Validation loss 2.7438

Epoch 2000, Training loss 6.3451, Validation loss 2.6549

Epoch 2500, Training loss 4.4604, Validation loss 2.5943

Epoch 3000, Training loss 3.6122, Validation loss 2.5549

Epoch 3500, Training loss 3.2670, Validation loss 2.5310

Epoch 4000, Training loss 3.1350, Validation loss 2.5175

Epoch 4500, Training loss 3.0837, Validation loss 2.5101

Epoch 5000, Training loss 3.0614, Validation loss 2.5059

AdamW alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.8065, Validation loss 55.5854

Epoch 3, Training loss 83.9657, Validation loss 54.6253

Epoch 500, Training loss 28.1584, Validation loss 3.0684

Epoch 1000, Training loss 16.9523, Validation loss 2.8717

Epoch 1500, Training loss 10.1140, Validation loss 2.7438

Epoch 2000, Training loss 6.3451, Validation loss 2.6549

Epoch 2500, Training loss 4.4604, Validation loss 2.5943

Epoch 3000, Training loss 3.6122, Validation loss 2.5549

Epoch 3500, Training loss 3.2670, Validation loss 2.5310

Epoch 4000, Training loss 3.1350, Validation loss 2.5175

Epoch 4500, Training loss 3.0837, Validation loss 2.5101

Epoch 5000, Training loss 3.0614, Validation loss 2.5059

Adamax alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.4559, Validation loss 51.7608

Adamax alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.4559, Validation loss 51.7608

Epoch 1000, Training loss 77.4467, Validation loss 47.1834

Epoch 1500, Training loss 73.6369, Validation loss 42.8327

Epoch 2000, Training loss 70.0265, Validation loss 38.7087

Epoch 2500, Training loss 66.6155, Validation loss 34.8115

Epoch 3000, Training loss 63.4039, Validation loss 31.1410

Epoch 3500, Training loss 60.3916, Validation loss 27.6971

Epoch 4000, Training loss 57.5788, Validation loss 24.4801

Epoch 4500, Training loss 54.9653, Validation loss 21.4897

Epoch 5000, Training loss 52.5513, Validation loss 18.7261

Adamax alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6382, Validation loss 56.5350

Epoch 500, Training loss 81.4559, Validation loss 51.7608

Epoch 1000, Training loss 77.4467, Validation loss 47.1834

Epoch 1500, Training loss 73.6369, Validation loss 42.8327

Epoch 2000, Training loss 70.0265, Validation loss 38.7087

Epoch 2500, Training loss 66.6155, Validation loss 34.8115

Epoch 3000, Training loss 63.4039, Validation loss 31.1410

Epoch 3500, Training loss 60.3916, Validation loss 27.6971

Epoch 4000, Training loss 57.5788, Validation loss 24.4801

Epoch 4500, Training loss 54.9653, Validation loss 21.4897

Epoch 5000, Training loss 52.5513, Validation loss 18.7261

Adamax alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4834, Validation loss 56.3584

Epoch 500, Training loss 53.0044, Validation loss 19.4455

Adamax alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4834, Validation loss 56.3584

Epoch 500, Training loss 53.0044, Validation loss 19.4455

Epoch 1000, Training loss 40.1065, Validation loss 5.5895

Epoch 1500, Training loss 36.3288, Validation loss 3.3336

Epoch 2000, Training loss 34.1454, Validation loss 3.1726

Epoch 2500, Training loss 32.3056, Validation loss 3.1781

Epoch 3000, Training loss 30.5537, Validation loss 3.1759

Epoch 3500, Training loss 28.8615, Validation loss 3.1658

Epoch 4000, Training loss 27.2246, Validation loss 3.1503

Epoch 4500, Training loss 25.6417, Validation loss 3.1314

Epoch 5000, Training loss 24.1124, Validation loss 3.1102

Adamax alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4834, Validation loss 56.3584

Epoch 500, Training loss 53.0044, Validation loss 19.4455

Epoch 1000, Training loss 40.1065, Validation loss 5.5895

Epoch 1500, Training loss 36.3288, Validation loss 3.3336

Epoch 2000, Training loss 34.1454, Validation loss 3.1726

Epoch 2500, Training loss 32.3056, Validation loss 3.1781

Epoch 3000, Training loss 30.5537, Validation loss 3.1759

Epoch 3500, Training loss 28.8615, Validation loss 3.1658

Epoch 4000, Training loss 27.2246, Validation loss 3.1503

Epoch 4500, Training loss 25.6417, Validation loss 3.1314

Epoch 5000, Training loss 24.1124, Validation loss 3.1102

Adamax alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9537, Validation loss 54.6123

Epoch 500, Training loss 32.9020, Validation loss 3.1556

Adamax alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9537, Validation loss 54.6123

Epoch 500, Training loss 32.9020, Validation loss 3.1556

Epoch 1000, Training loss 23.5443, Validation loss 2.9822

Epoch 1500, Training loss 14.0532, Validation loss 2.7960

Epoch 2000, Training loss 6.9738, Validation loss 2.6378

Epoch 2500, Training loss 3.7493, Validation loss 2.5423

Epoch 3000, Training loss 3.0767, Validation loss 2.5045

Epoch 3500, Training loss 3.0357, Validation loss 2.4957

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Adamax alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.9537, Validation loss 54.6123

Epoch 500, Training loss 32.9020, Validation loss 3.1556

Epoch 1000, Training loss 23.5443, Validation loss 2.9822

Epoch 1500, Training loss 14.0532, Validation loss 2.7960

Epoch 2000, Training loss 6.9738, Validation loss 2.6378

Epoch 2500, Training loss 3.7493, Validation loss 2.5423

Epoch 3000, Training loss 3.0767, Validation loss 2.5045

Epoch 3500, Training loss 3.0357, Validation loss 2.4957

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

RMSprop alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5085, Validation loss 56.3870

Epoch 500, Training loss 80.4863, Validation loss 50.6544

RMSprop alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5085, Validation loss 56.3870

Epoch 500, Training loss 80.4863, Validation loss 50.6544

Epoch 1000, Training loss 76.5649, Validation loss 46.1777

Epoch 1500, Training loss 72.8422, Validation loss 41.9271

Epoch 2000, Training loss 69.3178, Validation loss 37.9020

Epoch 2500, Training loss 65.9883, Validation loss 34.0989

Epoch 3000, Training loss 62.8568, Validation loss 30.5211

Epoch 3500, Training loss 59.9206, Validation loss 27.1655

Epoch 4000, Training loss 57.1805, Validation loss 24.0335

Epoch 4500, Training loss 54.6366, Validation loss 21.1248

Epoch 5000, Training loss 52.2880, Validation loss 18.4388

RMSprop alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.5085, Validation loss 56.3870

Epoch 500, Training loss 80.4863, Validation loss 50.6544

Epoch 1000, Training loss 76.5649, Validation loss 46.1777

Epoch 1500, Training loss 72.8422, Validation loss 41.9271

Epoch 2000, Training loss 69.3178, Validation loss 37.9020

Epoch 2500, Training loss 65.9883, Validation loss 34.0989

Epoch 3000, Training loss 62.8568, Validation loss 30.5211

Epoch 3500, Training loss 59.9206, Validation loss 27.1655

Epoch 4000, Training loss 57.1805, Validation loss 24.0335

Epoch 4500, Training loss 54.6366, Validation loss 21.1248

Epoch 5000, Training loss 52.2880, Validation loss 18.4388

RMSprop alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.1994, Validation loss 54.8928

Epoch 500, Training loss 49.4834, Validation loss 15.3363

RMSprop alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.1994, Validation loss 54.8928

Epoch 500, Training loss 49.4834, Validation loss 15.3363

Epoch 1000, Training loss 38.8005, Validation loss 4.5215

Epoch 1500, Training loss 34.7420, Validation loss 3.1943

Epoch 2000, Training loss 32.8426, Validation loss 3.2466

Epoch 2500, Training loss 31.0912, Validation loss 3.2254

Epoch 3000, Training loss 29.3959, Validation loss 3.2013

Epoch 3500, Training loss 27.7538, Validation loss 3.1766

Epoch 4000, Training loss 26.1648, Validation loss 3.1515

Epoch 4500, Training loss 24.6290, Validation loss 3.1267

Epoch 5000, Training loss 23.1463, Validation loss 3.1020

RMSprop alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 84.1994, Validation loss 54.8928

Epoch 500, Training loss 49.4834, Validation loss 15.3363

Epoch 1000, Training loss 38.8005, Validation loss 4.5215

Epoch 1500, Training loss 34.7420, Validation loss 3.1943

Epoch 2000, Training loss 32.8426, Validation loss 3.2466

Epoch 2500, Training loss 31.0912, Validation loss 3.2254

Epoch 3000, Training loss 29.3959, Validation loss 3.2013

Epoch 3500, Training loss 27.7538, Validation loss 3.1766

Epoch 4000, Training loss 26.1648, Validation loss 3.1515

Epoch 4500, Training loss 24.6290, Validation loss 3.1267

Epoch 5000, Training loss 23.1463, Validation loss 3.1020

RMSprop alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 77.4490, Validation loss 47.1859

Epoch 3, Training loss 72.3669, Validation loss 41.3872

Epoch 500, Training loss 21.2608, Validation loss 3.0369

RMSprop alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 77.4490, Validation loss 47.1859

Epoch 3, Training loss 72.3669, Validation loss 41.3872

Epoch 500, Training loss 21.2608, Validation loss 3.0369

Epoch 1000, Training loss 10.6040, Validation loss 2.8581

Epoch 1500, Training loss 4.8201, Validation loss 2.6892

Epoch 2000, Training loss 3.0810, Validation loss 2.5482

Epoch 2500, Training loss 3.0363, Validation loss 2.5299

Epoch 3000, Training loss 3.0364, Validation loss 2.5300

Epoch 3500, Training loss 3.0364, Validation loss 2.5300

Epoch 4000, Training loss 3.0364, Validation loss 2.5300

Epoch 4500, Training loss 3.0364, Validation loss 2.5300

Epoch 5000, Training loss 3.0364, Validation loss 2.5300

RMSprop alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 77.4490, Validation loss 47.1859

Epoch 3, Training loss 72.3669, Validation loss 41.3872

Epoch 500, Training loss 21.2608, Validation loss 3.0369

Epoch 1000, Training loss 10.6040, Validation loss 2.8581

Epoch 1500, Training loss 4.8201, Validation loss 2.6892

Epoch 2000, Training loss 3.0810, Validation loss 2.5482

Epoch 2500, Training loss 3.0363, Validation loss 2.5299

Epoch 3000, Training loss 3.0364, Validation loss 2.5300

Epoch 3500, Training loss 3.0364, Validation loss 2.5300

Epoch 4000, Training loss 3.0364, Validation loss 2.5300

Epoch 4500, Training loss 3.0364, Validation loss 2.5300

Epoch 5000, Training loss 3.0364, Validation loss 2.5300

Rprop alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6364, Validation loss 56.5330

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6364, Validation loss 56.5330

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.6468, Validation loss 56.5448

Epoch 3, Training loss 85.6364, Validation loss 56.5330

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4663, Validation loss 56.3388

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4663, Validation loss 56.3388

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.5694, Validation loss 56.4565

Epoch 3, Training loss 85.4663, Validation loss 56.3388

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.7817, Validation loss 54.4159

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.7817, Validation loss 54.4159

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

Rprop alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 84.7989, Validation loss 55.5771

Epoch 3, Training loss 83.7817, Validation loss 54.4159

Epoch 500, Training loss 3.0354, Validation loss 2.4949

Epoch 1000, Training loss 3.0354, Validation loss 2.4949

Epoch 1500, Training loss 3.0354, Validation loss 2.4949

Epoch 2000, Training loss 3.0354, Validation loss 2.4949

Epoch 2500, Training loss 3.0354, Validation loss 2.4949

Epoch 3000, Training loss 3.0354, Validation loss 2.4949

Epoch 3500, Training loss 3.0354, Validation loss 2.4949

Epoch 4000, Training loss 3.0354, Validation loss 2.4949

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

SGD alpha 0.0001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

SGD alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

Epoch 1000, Training loss 34.4265, Validation loss 3.2288

Epoch 1500, Training loss 33.7817, Validation loss 3.2290

Epoch 2000, Training loss 33.1505, Validation loss 3.2205

Epoch 2500, Training loss 32.5322, Validation loss 3.2116

Epoch 3000, Training loss 31.9266, Validation loss 3.2028

Epoch 3500, Training loss 31.3335, Validation loss 3.1942

Epoch 4000, Training loss 30.7525, Validation loss 3.1856

Epoch 4500, Training loss 30.1835, Validation loss 3.1772

Epoch 5000, Training loss 29.6262, Validation loss 3.1689

SGD alpha 0.0001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 85.0659, Validation loss 55.9043

Epoch 3, Training loss 84.4833, Validation loss 55.2618

Epoch 500, Training loss 35.2186, Validation loss 3.2051

Epoch 1000, Training loss 34.4265, Validation loss 3.2288

Epoch 1500, Training loss 33.7817, Validation loss 3.2290

Epoch 2000, Training loss 33.1505, Validation loss 3.2205

Epoch 2500, Training loss 32.5322, Validation loss 3.2116

Epoch 3000, Training loss 31.9266, Validation loss 3.2028

Epoch 3500, Training loss 31.3335, Validation loss 3.1942

Epoch 4000, Training loss 30.7525, Validation loss 3.1856

Epoch 4500, Training loss 30.1835, Validation loss 3.1772

Epoch 5000, Training loss 29.6262, Validation loss 3.1689

SGD alpha 0.001 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6356, Validation loss 3.1691

SGD alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6356, Validation loss 3.1691

Epoch 1000, Training loss 24.6520, Validation loss 3.0919

Epoch 1500, Training loss 20.6021, Validation loss 3.0243

Epoch 2000, Training loss 17.3109, Validation loss 2.9650

Epoch 2500, Training loss 14.6364, Validation loss 2.9129

Epoch 3000, Training loss 12.4629, Validation loss 2.8670

Epoch 3500, Training loss 10.6966, Validation loss 2.8266

Epoch 4000, Training loss 9.2613, Validation loss 2.7908

Epoch 4500, Training loss 8.0948, Validation loss 2.7591

Epoch 5000, Training loss 7.1469, Validation loss 2.7310

SGD alpha 0.001 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 79.9171, Validation loss 50.2310

Epoch 3, Training loss 74.8356, Validation loss 44.6451

Epoch 500, Training loss 29.6356, Validation loss 3.1691

Epoch 1000, Training loss 24.6520, Validation loss 3.0919

Epoch 1500, Training loss 20.6021, Validation loss 3.0243

Epoch 2000, Training loss 17.3109, Validation loss 2.9650

Epoch 2500, Training loss 14.6364, Validation loss 2.9129

Epoch 3000, Training loss 12.4629, Validation loss 2.8670

Epoch 3500, Training loss 10.6966, Validation loss 2.8266

Epoch 4000, Training loss 9.2613, Validation loss 2.7908

Epoch 4500, Training loss 8.0948, Validation loss 2.7591

Epoch 5000, Training loss 7.1469, Validation loss 2.7310

SGD alpha 0.01 epochs 500

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2194

Epoch 500, Training loss 7.1544, Validation loss 2.7312

SGD alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2194

Epoch 500, Training loss 7.1544, Validation loss 2.7312

Epoch 1000, Training loss 3.5517, Validation loss 2.5743

Epoch 1500, Training loss 3.1001, Validation loss 2.5225

Epoch 2000, Training loss 3.0435, Validation loss 2.5046

Epoch 2500, Training loss 3.0364, Validation loss 2.4983

Epoch 3000, Training loss 3.0355, Validation loss 2.4961

Epoch 3500, Training loss 3.0354, Validation loss 2.4953

Epoch 4000, Training loss 3.0354, Validation loss 2.4950

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

SGD alpha 0.01 epochs 5000

Epoch 1, Training loss 85.6554, Validation loss 56.5547

Epoch 2, Training loss 43.9632, Validation loss 11.2589

Epoch 3, Training loss 36.8792, Validation loss 4.2194

Epoch 500, Training loss 7.1544, Validation loss 2.7312

Epoch 1000, Training loss 3.5517, Validation loss 2.5743

Epoch 1500, Training loss 3.1001, Validation loss 2.5225

Epoch 2000, Training loss 3.0435, Validation loss 2.5046

Epoch 2500, Training loss 3.0364, Validation loss 2.4983

Epoch 3000, Training loss 3.0355, Validation loss 2.4961

Epoch 3500, Training loss 3.0354, Validation loss 2.4953

Epoch 4000, Training loss 3.0354, Validation loss 2.4950

Epoch 4500, Training loss 3.0354, Validation loss 2.4949

Epoch 5000, Training loss 3.0354, Validation loss 2.4949

df = pd.DataFrame(results)

df

| | optimizer_name | learning_rate | number_of_epochs | name | w | b | train_loss | val_loss |
|---|---|---|---|---|---|---|---|---|
| 0 | ASGD | 0.0001 | 500 | ASGD alpha 0.0001 epochs 500 | 2.245429 | 0.034534 | 35.218567 | 3.205099 |
| 1 | ASGD | 0.0001 | 5000 | ASGD alpha 0.0001 epochs 5000 | 2.584742 | -1.496300 | 29.626274 | 3.168919 |
| 2 | ASGD | 0.0001 | 5000 | ASGD alpha 0.0001 epochs 5000 | 2.584742 | -1.496300 | 29.626274 | 3.168919 |
| 3 | ASGD | 0.0010 | 500 | ASGD alpha 0.001 epochs 500 | 2.584764 | -1.496413 | 29.635824 | 3.169067 |
| 4 | ASGD | 0.0010 | 5000 | ASGD alpha 0.001 epochs 5000 | 4.279163 | -11.060122 | 7.151948 | 2.731149 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 76 | SGD | 0.0010 | 5000 | SGD alpha 0.001 epochs 5000 | 4.279835 | -11.063889 | 7.146945 | 2.731016 |
| 77 | SGD | 0.0010 | 5000 | SGD alpha 0.001 epochs 5000 | 4.279835 | -11.063889 | 7.146945 | 2.731016 |
| 78 | SGD | 0.0100 | 500 | SGD alpha 0.01 epochs 500 | 4.280899 | -11.069892 | 7.154356 | 2.731246 |
| 79 | SGD | 0.0100 | 5000 | SGD alpha 0.01 epochs 5000 | 5.377911 | -17.261759 | 3.035359 | 2.494914 |
| 80 | SGD | 0.0100 | 5000 | SGD alpha 0.01 epochs 5000 | 5.377911 | -17.261759 | 3.035359 | 2.494914 |

81 rows × 8 columns

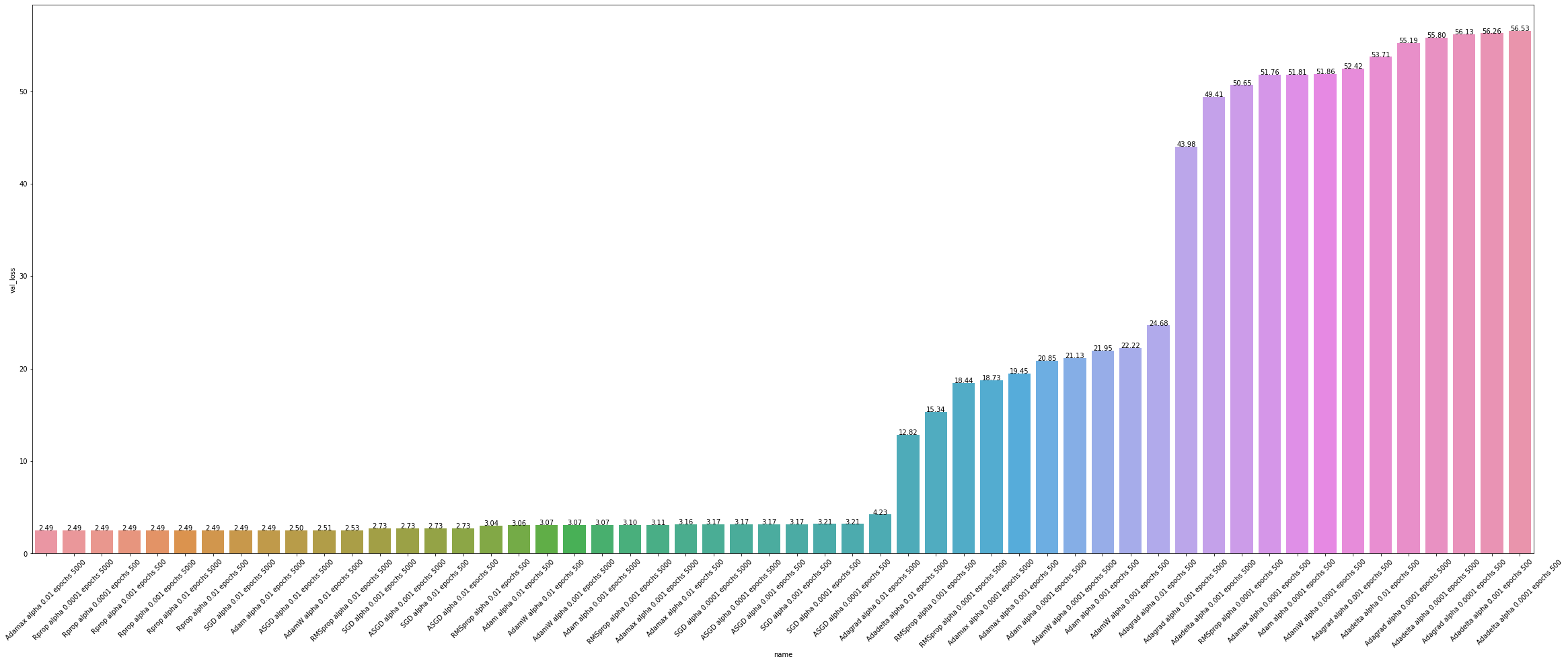

Sorting our dataframe by val_loss in ascending order shows which optimizer and hyperparameter combination performed best (lowest validation loss first).

df = df.sort_values(by=["val_loss"])

df

| | optimizer_name | learning_rate | number_of_epochs | name | w | b | train_loss | val_loss |
|---|---|---|---|---|---|---|---|---|
| 52 | Adamax | 0.0100 | 5000 | Adamax alpha 0.01 epochs 5000 | 5.377995 | -17.262234 | 3.035359 | 2.494895 |
| 53 | Adamax | 0.0100 | 5000 | Adamax alpha 0.01 epochs 5000 | 5.377995 | -17.262234 | 3.035359 | 2.494895 |
| 65 | Rprop | 0.0001 | 5000 | Rprop alpha 0.0001 epochs 5000 | 5.377999 | -17.262249 | 3.035357 | 2.494897 |
| 63 | Rprop | 0.0001 | 500 | Rprop alpha 0.0001 epochs 500 | 5.377999 | -17.262249 | 3.035357 | 2.494897 |
| 64 | Rprop | 0.0001 | 5000 | Rprop alpha 0.0001 epochs 5000 | 5.377999 | -17.262249 | 3.035357 | 2.494897 |
| ... | ... | ... | ... | ... | ... | ... | ... | ... |
| 11 | Adadelta | 0.0001 | 5000 | Adadelta alpha 0.0001 epochs 5000 | 1.007679 | 0.007671 | 84.997284 | 55.803486 |
| 10 | Adadelta | 0.0001 | 5000 | Adadelta alpha 0.0001 epochs 5000 | 1.007679 | 0.007671 | 84.997284 | 55.803486 |
| 18 | Adagrad | 0.0001 | 500 | Adagrad alpha 0.0001 epochs 500 | 1.004325 | 0.004324 | 85.284355 | 56.131142 |
| 12 | Adadelta | 0.0010 | 500 | Adadelta alpha 0.001 epochs 500 | 1.002963 | 0.002962 | 85.401489 | 56.264843 |
| 9 | Adadelta | 0.0001 | 500 | Adadelta alpha 0.0001 epochs 500 | 1.000295 | 0.000296 | 85.630035 | 56.525707 |

81 rows × 8 columns
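The sorted table contains repeated rows (the same optimizer, learning rate, and epoch count show up more than once), which makes it harder to compare optimizers at a glance. As a rough sketch, and not part of the original notebook, the repeats could be dropped and the best run per optimizer selected as shown below. The `sample` frame is a hypothetical stand-in holding only a few rows copied from the tables above, and breaking ties in favor of fewer epochs is an assumption made for this example.

```python
import pandas as pd

# A few rows copied from the results tables above (not the full 81 rows).
rows = [
    ("Adamax", 0.01, 5000, 3.035359, 2.494895),
    ("Adamax", 0.01, 5000, 3.035359, 2.494895),  # repeated run
    ("Rprop", 0.0001, 500, 3.035357, 2.494897),
    ("Rprop", 0.0001, 5000, 3.035357, 2.494897),
    ("SGD", 0.001, 5000, 7.146945, 2.731016),
    ("SGD", 0.01, 5000, 3.035359, 2.494914),
    ("SGD", 0.01, 5000, 3.035359, 2.494914),  # repeated run
]
columns = ["optimizer_name", "learning_rate", "number_of_epochs",
           "train_loss", "val_loss"]
sample = pd.DataFrame(rows, columns=columns)

# Keep one row per (optimizer, learning rate, epochs) configuration.
deduped = sample.drop_duplicates(
    subset=["optimizer_name", "learning_rate", "number_of_epochs"]
)

# Lowest validation loss per optimizer; on ties, prefer the shorter run.
best_per_optimizer = (
    deduped.sort_values(["val_loss", "number_of_epochs"])
    .groupby("optimizer_name", as_index=False)
    .first()
)
print(best_per_optimizer)
```

On the real `df`, the same two steps would apply unchanged, since it has the same column names.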

def show_values_on_bars(axs):
    # from https://stackoverflow.com/a/51535326
    def _show_on_single_plot(ax):
        for p in ax.patches:
            _x = p.get_x() + p.get_width() / 2
            _y = p.get_y() + p.get_height()
            value = '{:.2f}'.format(p.get_height())
            ax.text(_x, _y, value, ha="center")

    if isinstance(axs, np.ndarray):
        for idx, ax in np.ndenumerate(axs):
            _show_on_single_plot(ax)
    else:
        _show_on_single_plot(axs)

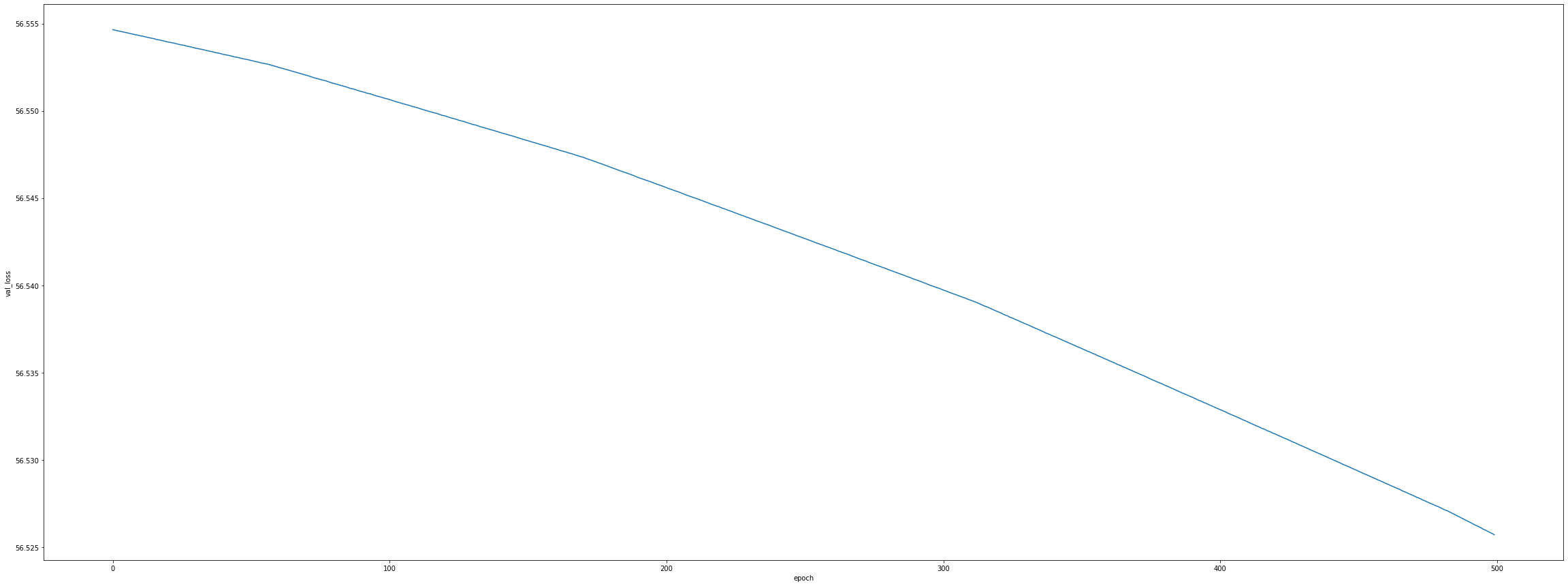
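For comparison, here is a minimal sketch of the same idea (printing each bar's height above it) using matplotlib's built-in `Axes.bar_label`, assuming matplotlib 3.4 or later is installed. It is an alternative to the helper above, not the code that produced the figure. The bar names are made up for the example, while the values are final validation losses copied from the results table.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend; drop this line inside a notebook
from matplotlib import pyplot

# Final validation losses of four 5000-epoch runs, copied from the results table.
names = ["Adamax 0.01", "SGD 0.01", "SGD 0.001", "ASGD 0.0001"]
val_losses = [2.494895, 2.494914, 2.731016, 3.168919]

fig, ax = pyplot.subplots(figsize=(8, 4))
bars = ax.bar(names, val_losses)

# bar_label annotates every bar in the container with its height.
labels = ax.bar_label(bars, fmt="%.2f")
ax.set_ylabel("val_loss")

label_texts = [t.get_text() for t in labels]
print(label_texts)
```

The hand-written helper remains useful on older matplotlib versions or when the axes arrive as a NumPy array of subplots, which it handles explicitly.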

Visualizing Loss Over Time

val_loss_over_time_by_name

{'ASGD alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.904346

2 2 55.261829

3 3 54.627026

4 4 53.999836

.. ... ...

495 495 3.207940

496 496 3.207206

497 497 3.206488

498 498 3.205786

499 499 3.205099

[500 rows x 2 columns],

'ASGD alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.904346

2 2 55.261829

3 3 54.627026

4 4 53.999836

... ... ...

4995 4995 3.168985

4996 4996 3.168968

4997 4997 3.168952

4998 4998 3.168935

4999 4999 3.168919

[5000 rows x 2 columns],

'ASGD alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 50.230995

2 2 44.645107

3 3 39.711609

4 4 35.354980

.. ... ...

495 495 3.169730

496 496 3.169564

497 497 3.169399

498 498 3.169234

499 499 3.169067

[500 rows x 2 columns],

'ASGD alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 50.230995

2 2 44.645107

3 3 39.711609

4 4 35.354980

... ... ...

4995 4995 2.731359

4996 4996 2.731308

4997 4997 2.731251

4998 4998 2.731201

4999 4999 2.731149

[5000 rows x 2 columns],

'ASGD alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 11.258911

2 2 4.219460

3 3 3.260551

4 4 3.188705

.. ... ...

495 495 2.733504

496 496 2.732972

497 497 2.732441

498 498 2.731906

499 499 2.731377

[500 rows x 2 columns],

'ASGD alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 11.258911

2 2 4.219460

3 3 3.260551

4 4 3.188705

... ... ...

4995 4995 2.495191

4996 4996 2.495189

4997 4997 2.495189

4998 4998 2.495187

4999 4999 2.495189

[5000 rows x 2 columns],

'Adadelta alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.554615

2 2 56.554577

3 3 56.554543

4 4 56.554512

.. ... ...

495 495 56.526024

496 496 56.525955

497 497 56.525871

498 498 56.525791

499 499 56.525707

[500 rows x 2 columns],

'Adadelta alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.554615

2 2 56.554577

3 3 56.554543

4 4 56.554512

... ... ...

4995 4995 55.804359

4996 4996 55.804153

4997 4997 55.803925

4998 4998 55.803707

4999 4999 55.803486

[5000 rows x 2 columns],

'Adadelta alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.554329

2 2 56.554020

3 3 56.553696

4 4 56.553364

.. ... ...

495 495 56.267933

496 496 56.267162

497 497 56.266396

498 498 56.265621

499 499 56.264843

[500 rows x 2 columns],

'Adadelta alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.554329

2 2 56.554020

3 3 56.553696

4 4 56.553364

... ... ...

4995 4995 49.416130

4996 4996 49.414135

4997 4997 49.412148

4998 4998 49.410164

4999 4999 49.408180

[5000 rows x 2 columns],

'Adadelta alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.551548

2 2 56.548363

3 3 56.545120

4 4 56.541828

.. ... ...

495 495 53.743576

496 496 53.736214

497 497 53.728844

498 498 53.721470

499 499 53.714096

[500 rows x 2 columns],

'Adadelta alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.551548

2 2 56.548363

3 3 56.545120

4 4 56.541828

... ... ...

4995 4995 12.848351

4996 4996 12.842356

4997 4997 12.836356

4998 4998 12.830364

4999 4999 12.824373

[5000 rows x 2 columns],

'Adagrad alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.537872

3 3 56.532219

4 4 56.527313

.. ... ...

495 495 56.132912

496 496 56.132469

497 497 56.132030

498 498 56.131580

499 499 56.131142

[500 rows x 2 columns],

'Adagrad alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.537872

3 3 56.532219

4 4 56.527313

... ... ...

4995 4995 55.192818

4996 4996 55.192669

4997 4997 55.192543

4998 4998 55.192398

4999 4999 55.192265

[5000 rows x 2 columns],

'Adagrad alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.387157

3 3 56.330597

4 4 56.281654

.. ... ...

495 495 52.438919

496 496 52.434738

497 497 52.430573

498 498 52.426403

499 499 52.422249

[500 rows x 2 columns],

'Adagrad alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.387157

3 3 56.330597

4 4 56.281654

... ... ...

4995 4995 43.979683

4996 4996 43.978531

4997 4997 43.977379

4998 4998 43.976227

4999 4999 43.975075

[5000 rows x 2 columns],

'Adagrad alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.894497

3 3 54.342110

4 4 53.867180

.. ... ...

495 495 24.773571

496 496 24.750305

497 497 24.727089

498 498 24.703909

499 499 24.680784

[500 rows x 2 columns],

'Adagrad alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.894497

3 3 54.342110

4 4 53.867180

... ... ...

4995 4995 4.227723

4996 4996 4.227191

4997 4997 4.226661

4998 4998 4.226130

4999 4999 4.225605

[5000 rows x 2 columns],

'Adam alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.535011

3 3 56.525185

4 4 56.515358

.. ... ...

495 495 51.851482

496 496 51.842304

497 497 51.833126

498 498 51.823948

499 499 51.814774

[500 rows x 2 columns],

'Adam alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.535011

3 3 56.525185

4 4 56.515358

... ... ...

4995 4995 20.871820

4996 4996 20.866917

4997 4997 20.862013

4998 4998 20.857115

4999 4999 20.852211

[5000 rows x 2 columns],

'Adam alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.358410

3 3 56.260429

4 4 56.162548

.. ... ...

495 495 22.123474

496 496 22.078865

497 497 22.034321

498 498 21.989882

499 499 21.945518

[500 rows x 2 columns],

'Adam alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.358410

3 3 56.260429

4 4 56.162548

... ... ...

4995 4995 3.073970

4996 4996 3.073947

4997 4997 3.073922

4998 4998 3.073897

4999 4999 3.073874

[5000 rows x 2 columns],

'Adam alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.608772

3 3 53.649937

4 4 52.700714

.. ... ...

495 495 3.063385

496 496 3.062908

497 497 3.062427

498 498 3.061947

499 499 3.061470

[500 rows x 2 columns],

'Adam alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.608772

3 3 53.649937

4 4 52.700714

... ... ...

4995 4995 2.494981

4996 4996 2.494981

4997 4997 2.494981

4998 4998 2.494981

4999 4999 2.494979

[5000 rows x 2 columns],

'AdamW alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.544907

2 2 56.535187

3 3 56.525459

4 4 56.515717

.. ... ...

495 495 51.893639

496 496 51.884533

497 497 51.875420

498 498 51.866318

499 499 51.857204

[500 rows x 2 columns],

'AdamW alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.544907

2 2 56.535187

3 3 56.525459

4 4 56.515717

... ... ...

4995 4995 21.153263

4996 4996 21.148376

4997 4997 21.143486

4998 4998 21.138601

4999 4999 21.133724

[5000 rows x 2 columns],

'AdamW alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.457317

2 2 56.360092

3 3 56.262943

4 4 56.165882

.. ... ...

495 495 22.396433

496 496 22.352057

497 497 22.307760

498 498 22.263554

499 499 22.219418

[500 rows x 2 columns],

'AdamW alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.457317

2 2 56.360092

3 3 56.262943

4 4 56.165882

... ... ...

4995 4995 3.069065

4996 4996 3.069041

4997 4997 3.069020

4998 4998 3.068998

4999 4999 3.068979

[5000 rows x 2 columns],

'AdamW alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.585354

2 2 54.625298

3 3 53.674629

4 4 52.733505

.. ... ...

495 495 3.070481

496 496 3.069960

497 497 3.069438

498 498 3.068917

499 499 3.068398

[500 rows x 2 columns],

'AdamW alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.585354

2 2 54.625298

3 3 53.674629

4 4 52.733505

... ... ...

4995 4995 2.505894

4996 4996 2.505887

4997 4997 2.505881

4998 4998 2.505876

4999 4999 2.505870

[5000 rows x 2 columns],

'Adamax alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.535011

3 3 56.525185

4 4 56.515358

.. ... ...

495 495 51.798332

496 496 51.788948

497 497 51.779568

498 498 51.770184

499 499 51.760803

[500 rows x 2 columns],

'Adamax alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.544823

2 2 56.535011

3 3 56.525185

4 4 56.515358

... ... ...

4995 4995 18.747269

4996 4996 18.741966

4997 4997 18.736656

4998 4998 18.731358

4999 4999 18.726057

[5000 rows x 2 columns],

'Adamax alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.358368

3 3 56.260292

4 4 56.162277

.. ... ...

495 495 19.641920

496 496 19.592678

497 497 19.543533

498 498 19.494484

499 499 19.445547

[500 rows x 2 columns],

'Adamax alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.358368

3 3 56.260292

4 4 56.162277

... ... ...

4995 4995 3.110403

4996 4996 3.110355

4997 4997 3.110315

4998 4998 3.110270

4999 4999 3.110229

[5000 rows x 2 columns],

'Adamax alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.612324

3 3 53.660469

4 4 52.721535

.. ... ...

495 495 3.156959

496 496 3.156623

497 497 3.156288

498 498 3.155955

499 499 3.155621

[500 rows x 2 columns],

'Adamax alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.612324

3 3 53.660469

4 4 52.721535

... ... ...

4995 4995 2.494895

4996 4996 2.494895

4997 4997 2.494895

4998 4998 2.494895

4999 4999 2.494895

[5000 rows x 2 columns],

'RMSprop alpha 0.0001 epochs 500': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.386971

3 3 56.330135

4 4 56.280827

.. ... ...

495 495 50.691227

496 496 50.682026

497 497 50.672829

498 498 50.663620

499 499 50.654415

[500 rows x 2 columns],

'RMSprop alpha 0.0001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 56.456478

2 2 56.386971

3 3 56.330135

4 4 56.280827

... ... ...

4995 4995 18.459406

4996 4996 18.454260

4997 4997 18.449104

4998 4998 18.443958

4999 4999 18.438801

[5000 rows x 2 columns],

'RMSprop alpha 0.001 epochs 500': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.892769

3 3 54.337593

4 4 53.859093

.. ... ...

495 495 15.494940

496 496 15.455171

497 497 15.415486

498 498 15.375866

499 499 15.336336

[500 rows x 2 columns],

'RMSprop alpha 0.001 epochs 5000': epoch val_loss

0 0 56.554653

1 1 55.577057

2 2 54.892769

3 3 54.337593

4 4 53.859093

... ... ...

4995 4995 3.102169

4996 4996 3.098152

4997 4997 3.102068

4998 4998 3.098054

4999 4999 3.101970

[5000 rows x 2 columns],

'RMSprop alpha 0.01 epochs 500': epoch val_loss

0 0 56.554653

1 1 47.185917

2 2 41.387192

3 3 37.090027

4 4 33.661922

.. ... ...

495 495 3.038208

496 496 3.037877

497 497 3.037549

498 498 3.037217

499 499 3.036888

[500 rows x 2 columns],

'RMSprop alpha 0.01 epochs 5000': epoch val_loss

0 0 56.554653

1 1 47.185917

2 2 41.387192

3 3 37.090027

4 4 33.661922

... ... ...

4995 4995 2.530038

4996 4996 2.462016

4997 4997 2.530038

4998 4998 2.462016

4999 4999 2.530038

[5000 rows x 2 columns],